Split Testing - How to A/B Test Your Product Page

Listing optimization is an important part of selling a product online. A/B and split testing provide tools to develop the content of your product page and help sell more units.

Listing optimization underpins the success of any product online. How a shopper sees our product in search results, and on our product page, plays a leading role in converting a sale.

The best way to understand how shoppers see our product is to treat each order they place as a 'yes' vote in a poll that asks, 'Would you buy this product?' This is far more reliable than asking friends and family or surveying customers: actions speak louder than words.

Both A/B and split testing allow us to build our content strategy on this premise. With them, we can run ongoing, iterative experiments: make a small, isolated change to our product page, compare the results, then build on them.

What is an A/B Test

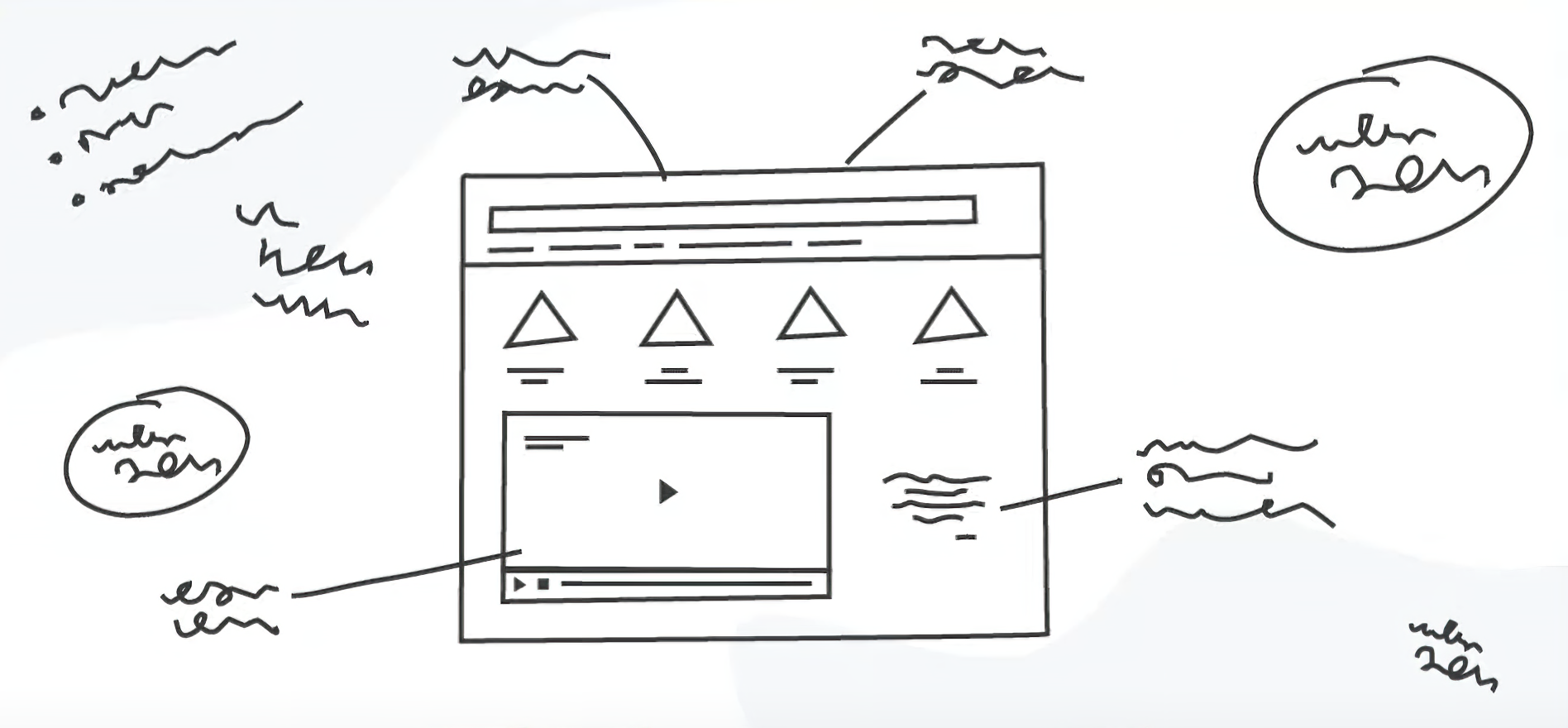

A/B testing and split testing are experimental ways of improving our conversions. The process involves publishing two variants of our product page and presenting the variants to different visitors to compare which converts better.
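Presenting each variant to a different set of visitors usually relies on assigning every visitor to a bucket. The Python sketch below shows one common technique, deterministic hashing, so that a returning visitor keeps seeing the same variant. It is a generic illustration, not how Amazon assigns traffic; the `assign_variant` helper, the experiment name and the visitor IDs are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, share_b: float = 0.5) -> str:
    """Deterministically assign a visitor to variant "A" or "B".

    Hashing the experiment name together with the visitor ID gives each
    visitor a stable, roughly uniform position in [0, 1). Visitors whose
    position falls below `share_b` see B; everyone else sees the control, A.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 6 bytes (48 bits), which a float represents exactly, so
    # the position is always strictly less than 1.
    position = int.from_bytes(digest[:6], "big") / 2**48
    return "B" if position < share_b else "A"


if __name__ == "__main__":
    # Hypothetical visitor IDs; with share_b=0.5 roughly half should see B.
    visitors = [f"visitor-{i}" for i in range(10_000)]
    seen_b = sum(assign_variant(v, "title-test") == "B" for v in visitors)
    print(f"{seen_b} of {len(visitors)} visitors assigned to B")
```

Including the experiment name in the hash means the same visitor can land in different buckets for different experiments, which keeps one test from biasing the next.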

A/B testing differs from split testing in how the variants are presented to visitors: an A/B test runs the two variants side by side, rather than dividing traffic equally between them.

For each experiment we make a particular change to our page content, such as the title or primary image. When we've obtained a meaningful sample size, we can compare our business metrics (sessions and sales) to infer a result.

Why You Should A/B Test

How do you currently decide on the content of your listing? Common ways include:

- Following what has worked for others

- Repeating what broadly worked for you in the past

- Your own intuition

- The ideas of marketing or design professionals

All of the above are based on what may have converted in the past. While these serve as a good starting point, we can never truly know how shoppers will respond to our listing without testing and iteration.

A/B testing allows sellers to make data-driven changes to their product page. Because each winning variant becomes the baseline for the next test, small improvements can compound into a profound effect on your revenue over the course of a year or more.
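To see why the effect compounds, the sketch below multiplies a baseline conversion rate by a series of relative gains from winning tests. The baseline, the gains and the `compounded_rate` helper are hypothetical, and it assumes each gain holds up after its test ends.

```python
from math import prod


def compounded_rate(baseline: float, relative_gains: list[float]) -> float:
    """Conversion rate after adopting each winning variant in turn.

    Each gain is relative to the page it replaced, so 0.05 means the winner
    converted 5% better than the previous version. Gains multiply rather than
    add because every winner becomes the baseline for the next test.
    """
    return baseline * prod(1 + gain for gain in relative_gains)


if __name__ == "__main__":
    # Hypothetical: a 10% unit session percentage and four winning tests of +5% each.
    rate = compounded_rate(0.10, [0.05] * 4)
    print(f"{rate:.2%}")  # 12.16%: about a 21.6% relative uplift, not 20%
```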

Thinking of B Variables

Before testing, form a hypothesis about how you will improve your conversion rate; the more evidence supporting it, the better. Ways to find room for improvement include:

- Compare your page to competitors in your category or across relevant search terms

- Look for common themes in your reviews, questions and feedback

- Review customer return reasons in Voice of the Customer; frequent returns can indicate that your product page is inaccurate or incomplete

- Draw on your own understanding of the market

- Survey your existing customers about their first impressions of your listing

After collecting evidence, you can form a hypothesis and create a testable variation of your product page that addresses the issues you found. Amazon provide some good ideas for variables to change. A good hypothesis follows the format:

Changing [X] into [Y] will [Z]

The B variant should change only one element of the page, yet differ enough from A to produce a measurable difference by the end of the test period.
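One way to enforce the one-element rule is to record both variants as simple field-to-content maps and check that exactly one field differs before you publish B. The sketch below assumes this representation; the field names, listing content and helper functions are hypothetical, and the last helper fills in the 'Changing [X] into [Y] will [Z]' template.

```python
def changed_elements(control: dict[str, str], variant: dict[str, str]) -> list[str]:
    """Return the names of page elements whose content differs between A and B."""
    names = control.keys() | variant.keys()
    return sorted(name for name in names if control.get(name) != variant.get(name))


def check_single_change(control: dict[str, str], variant: dict[str, str]) -> str:
    """Return the one element B changes, or raise if it changes zero or several."""
    changed = changed_elements(control, variant)
    if len(changed) != 1:
        raise ValueError(f"B should change exactly one element, found: {changed}")
    return changed[0]


def hypothesis(element: str, before: str, after: str, outcome: str) -> str:
    """Fill in the 'Changing [X] into [Y] will [Z]' template."""
    return f"Changing the {element} '{before}' into '{after}' will {outcome}"


if __name__ == "__main__":
    # Hypothetical listing content for illustration only.
    control = {
        "title": "Stainless Steel Water Bottle 750ml",
        "main_image": "bottle-front.jpg",
        "bullet_1": "Keeps drinks cold",
    }
    variant = {**control, "title": "Insulated Stainless Steel Water Bottle 750ml"}

    element = check_single_change(control, variant)
    print(hypothesis(element, control[element], variant[element],
                     "increase unit session percentage"))
```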

Method 1 — Manage Your Experiments

Fortunately for Amazon sellers, Amazon recently introduced Manage Your Experiments. The feature allows brand owners to run split tests on the content of their product page for a fixed duration.

Experiment results are updated weekly for the duration of the experiment. When an experiment has concluded, the results detail the performance of each variant, including conversion rate, units sold per unique visitor and sample size. A probability distribution is also provided, along with the projected one-year impact of the change.

The feature fits into the normal shopping experience. Both the A and B variants of the page are live during the experiment, but each is shown to different customers. Because the variants run at the same time, seasonality and other external factors affect both equally, so the comparison accounts for them. Testing this way has the added benefit of not affecting your search rank.

As of 5 October 2021, Manage Your Experiments is only available to brand owners. If you sell on Amazon but have not yet registered your brand, visit the Amazon Brand Services page to enroll, or continue to Method 2.

Method 2 — Manual

Manual A/B testing is a good alternative for sellers without a registered brand on Amazon. The method takes more effort, but it can achieve a similar result. To get started:

- Choose a start date where the preceding 4-10 weeks (period A) had, and the following 4-10 weeks (period B) should have, typical sales activity

- Update your listing to the B variant

- After 4-10 weeks, review the product page's sales and traffic for periods A and B in the Business Reports section of Seller Central

- Compare the sessions, views, units sold and unit session percentage between the two periods
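The steps above can be sketched in Python, assuming you copy the totals for each period from Business Reports by hand. The `PeriodTotals` class, the helper functions and all numbers are hypothetical; unit session percentage is computed here as units ordered per 100 sessions.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PeriodTotals:
    """Totals copied by hand from Business Reports for one test period."""
    sessions: int
    page_views: int
    units_ordered: int

    @property
    def unit_session_percentage(self) -> float:
        # Units ordered per 100 sessions.
        return 100 * self.units_ordered / self.sessions


def period_windows(start: date, weeks: int) -> tuple[tuple[date, date], tuple[date, date]]:
    """Equal-length (first day, last day) windows: A ends the day before B starts."""
    length = timedelta(weeks=weeks)
    period_a = (start - length, start - timedelta(days=1))
    period_b = (start, start + length - timedelta(days=1))
    return period_a, period_b


def percent_change(before: float, after: float) -> float:
    return 100 * (after - before) / before


def compare(a: PeriodTotals, b: PeriodTotals) -> dict[str, float]:
    """Percentage change from period A to period B for each metric."""
    return {
        "sessions": percent_change(a.sessions, b.sessions),
        "page_views": percent_change(a.page_views, b.page_views),
        "units_ordered": percent_change(a.units_ordered, b.units_ordered),
        "unit_session_percentage": percent_change(
            a.unit_session_percentage, b.unit_session_percentage
        ),
    }


if __name__ == "__main__":
    # Hypothetical: B variant published on 4 March 2024, six weeks either side.
    (a_start, a_end), (b_start, b_end) = period_windows(date(2024, 3, 4), weeks=6)
    print(f"Period A: {a_start} to {a_end}; period B: {b_start} to {b_end}")

    # Hypothetical totals for each window.
    a = PeriodTotals(sessions=4_000, page_views=5_200, units_ordered=400)
    b = PeriodTotals(sessions=4_100, page_views=5_350, units_ordered=451)
    for metric, change in compare(a, b).items():
        print(f"{metric}: {change:+.1f}%")
```

Keeping both windows the same length, as `period_windows` does, makes the raw totals directly comparable.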

Because the variants run one after the other rather than simultaneously, this method does not account for external factors, such as seasonality, that may affect sales. Keep both periods the same length and, where possible, test over a longer time frame.

Additionally, this method does not give us the probability distribution that the first method provides, but we can break down changes in page views, unique sessions, units sold and unit session percentage to infer what happened. For example, if unique sessions increased after a primary image change, that would suggest the new image stood out against the competition. If unit session percentage increased after a title change, that would suggest the new title highlighted attributes that matter to customers.
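If you want a probability for the manual method, you can estimate one yourself from the totals for each period. The sketch below is not Amazon's method: it swaps in a common Bayesian technique, a Beta-Binomial model estimated by Monte Carlo simulation, and assumes each session either converts or does not, with a uniform prior. The `prob_b_beats_a` helper and the example totals are hypothetical.

```python
import random
from typing import Optional


def prob_b_beats_a(units_a: int, sessions_a: int, units_b: int, sessions_b: int,
                   draws: int = 20_000, seed: Optional[int] = None) -> float:
    """Estimate the probability that B's true conversion rate exceeds A's.

    Treats each session as converting or not (so units must not exceed
    sessions) and gives each variant a uniform Beta(1, 1) prior, so each
    posterior is Beta(1 + units, 1 + sessions - units).
    """
    if units_a > sessions_a or units_b > sessions_b:
        raise ValueError("units cannot exceed sessions in this model")
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Draw a plausible conversion rate for each variant from its posterior.
        rate_a = rng.betavariate(1 + units_a, 1 + sessions_a - units_a)
        rate_b = rng.betavariate(1 + units_b, 1 + sessions_b - units_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical totals from Business Reports for periods A and B.
    p = prob_b_beats_a(units_a=400, sessions_a=4_000,
                       units_b=451, sessions_b=4_100, seed=42)
    print(f"Estimated probability that B converts better: {p:.1%}")
```

Because periods A and B run one after the other, this probability still cannot separate the effect of your change from seasonal or other external shifts between the two periods, and it is only an approximation when shoppers often buy several units in one session.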

Are These Results Reliable

A random sample of data will never perfectly represent every shopper, but you can get close. For greater accuracy, keep two points in mind: 1) the accuracy of your result improves as your sample size (session count) grows, and 2) the population (the number of potential shoppers for your category of product) matters much less; once the population is large, the sample size needed for a given margin of error barely grows with it.
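The relationship between session count, population and accuracy can be sketched with the standard margin-of-error formula for a proportion, including a finite population correction. The sketch below assumes a 99% confidence level (z = 2.58) and the conservative p = 0.5; the `margin_of_error` helper is hypothetical, and 386,527 is the water bottle search volume estimate quoted in this section.

```python
import math


def margin_of_error(sessions: int, population: int,
                    z: float = 2.58, p: float = 0.5) -> float:
    """Margin of error for an observed proportion, with a finite population correction.

    z = 2.58 corresponds to about 99% confidence, and p = 0.5 is the most
    conservative (widest) assumption about the true proportion.
    """
    if not 0 < sessions <= population or population < 2:
        raise ValueError("need 0 < sessions <= population and population >= 2")
    standard_error = math.sqrt(p * (1 - p) / sessions)
    fpc = math.sqrt((population - sessions) / (population - 1))
    return z * standard_error * fpc


if __name__ == "__main__":
    # More sessions shrink the margin of error...
    for sessions in (500, 1_000, 2_000, 4_000):
        print(sessions, f"{margin_of_error(sessions, 386_527):.2%}")
    # ...while a much larger population barely changes it.
    for population in (386_527, 10_000_000):
        print(population, f"{margin_of_error(1_841, population):.2%}")
```

Quadrupling the session count roughly halves the margin of error, because it shrinks with the square root of the sample size.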

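For those who would like to learn more, Slovin's formula gives a rough idea of the sample size you need for a target margin of error, although it does not take a confidence level into account. To factor in confidence as well, use Cochran's formula with a finite population correction, the approach used by many online sample size calculators. Water bottles on Amazon's US store generate an estimated monthly search volume of 386,527.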

With a 3% margin of error and 99% confidence, sellers in this category would need 1,841 sessions for their listing's performance that month to be representative.
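The two formulas can be compared directly. Slovin's formula, n = N / (1 + N e²), takes only the population N and the margin of error e, while Cochran's formula with a finite population correction also takes the z-score for the chosen confidence level. The sketch below assumes p = 0.5 and z = 2.58 for 99% confidence, which reproduces the 1,841 figure; the helper names are hypothetical.

```python
import math


def slovin(population: int, margin: float) -> int:
    """Slovin's formula, n = N / (1 + N * e^2); it has no confidence level input."""
    return math.ceil(population / (1 + population * margin**2))


def cochran_fpc(population: int, margin: float, z: float, p: float = 0.5) -> int:
    """Cochran's sample size with a finite population correction."""
    n0 = z**2 * p * (1 - p) / margin**2  # sample size for an unlimited population
    return math.ceil(n0 / (1 + (n0 - 1) / population))


if __name__ == "__main__":
    N = 386_527  # estimated monthly searches for water bottles (from the text)
    print(slovin(N, 0.03))             # 1108
    print(cochran_fpc(N, 0.03, 2.58))  # 1841 (z = 2.58 for about 99% confidence)
```

With the more precise z of 2.576, Cochran's formula gives about 1,835 sessions, which shows how sensitive these guideline figures are to rounding.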

Use a sample size calculator to figure out your ideal sample size. Please note that this is only a guideline.

Summary

A/B and split testing can be used to develop our content strategy and improve our units sold. To get more out of our experiments, it's necessary to be methodical and understand what constitutes meaningful data.

It is equally important to account for inconclusive results. Our sample size may be too small to confidently name a winner, or our A and B variants may be too similar to affect shopper behaviour. It could also be that both variants performed equally well, or that customers simply do not care about the element being tested.

For the best outcome, sellers should continually test different elements of their product page. Lastly, remember to keep testing across different holidays and seasons; this will reveal why customers buy your product at different times of the calendar year.